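How to use poll and epoll should need no further introduction. When there are many fds, epoll is more efficient than poll. Let us read the kernel source to see exactly why.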

Anatomy of poll

The poll system call:

int poll(struct pollfd *fds, nfds_t nfds, int timeout);

The corresponding implementation is:

[fs/select.c --> sys_poll]

    asmlinkage long sys_poll(struct pollfd __user *ufds, unsigned int nfds, long timeout)
    {
        struct poll_wqueues table;
        int fdcount, err;
        unsigned int i;
        struct poll_list *head;
        struct poll_list *walk;

        /* Do a sanity check on nfds ... */
        /* The nfds supplied by the user may not exceed the maximum number of
           fds supported by one struct files_struct (256 by default). */
        if (nfds > current->files->max_fdset && nfds > OPEN_MAX)
            return -EINVAL;

        if (timeout) {
            /* Careful about overflow in the intermediate values */
            if ((unsigned long) timeout < MAX_SCHEDULE_TIMEOUT / HZ)
                timeout = (unsigned long)(timeout*HZ+999)/1000+1;
            else /* Negative or overflow */
                timeout = MAX_SCHEDULE_TIMEOUT;
        }

        poll_initwait(&table);

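poll_initwait is the key call here. Judging by its name, it initializes the variable table, and table (a struct poll_wqueues, which embeds a poll_table) is a central variable throughout the execution of poll. struct poll_table itself contains nothing but a function pointer: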

[include/linux/poll.h]

    /*
     * structures and helpers for f_op->poll implementations
     */
    typedef void (*poll_queue_proc)(struct file *, wait_queue_head_t *,
                                    struct poll_table_struct *);

    typedef struct poll_table_struct {
        poll_queue_proc qproc;
    } poll_table;
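As a rough illustration of why a table holding a single function pointer is enough, the following user-space sketch mimics the pattern. Everything in it (wait_head, poll_ctx, ctx_queue, poll_wait_model, fake_driver_poll) is a hypothetical stand-in rather than kernel code. It assumes only that the caller embeds the table in a larger per-call structure and recovers that structure inside the callback.

```c
#include <stddef.h>

/* Hypothetical user-space stand-ins; the names echo the kernel's but this is not kernel code. */
struct wait_head { int nwaiters; };            /* stands in for wait_queue_head_t */

struct poll_table;
typedef void (*queue_proc)(struct wait_head *wh, struct poll_table *pt);

struct poll_table { queue_proc qproc; };       /* one function pointer, nothing else */

/* Larger per-call context that embeds the table (the role struct poll_wqueues plays). */
struct poll_ctx {
    struct poll_table pt;
    int registered;                            /* how many queues we were hooked onto */
};

#define container_of(ptr, type, member) \
    ((type *)((char *)(ptr) - offsetof(type, member)))

/* Callback: recover the enclosing context from the embedded table pointer. */
static void ctx_queue(struct wait_head *wh, struct poll_table *pt)
{
    struct poll_ctx *ctx = container_of(pt, struct poll_ctx, pt);
    wh->nwaiters++;
    ctx->registered++;
}

/* Dispatch helper: a NULL table means "do not register anything". */
static void poll_wait_model(struct wait_head *wh, struct poll_table *pt)
{
    if (pt && wh)
        pt->qproc(wh, pt);
}

/* A pretend driver poll method: offer its queue, then report a mask. */
static unsigned int fake_driver_poll(struct wait_head *wh, struct poll_table *pt)
{
    poll_wait_model(wh, pt);
    return 0x1;                                /* pretend "readable" */
}
```

Calling fake_driver_poll(&wh, &ctx.pt) bumps both counters, while fake_driver_poll(&wh, NULL) returns the mask without registering anything.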

Now let us see what poll_initwait actually does:

[fs/select.c]

    void __pollwait(struct file *filp, wait_queue_head_t *wait_address, poll_table *p);

    void poll_initwait(struct poll_wqueues *pwq)
    {
        pwq->pt.qproc = __pollwait; /* written out by hand here for readability */
        pwq->error = 0;
        pwq->table = NULL;
    }


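Clearly, the main job of poll_initwait is to set the callback in the poll_table member of table to __pollwait. __pollwait is needed not only by the poll system call; select uses the very same __pollwait. Put bluntly, it is the kernel's standard callback for this kind of asynchronous wait. epoll does not use it: it adds a callback of its own in order to run efficiently, but that comes later. We will not discuss the implementation of __pollwait yet and instead continue with sys_poll: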

[fs/select.c --> sys_poll]

        head = NULL;
        walk = NULL;
        i = nfds;
        err = -ENOMEM;
        while (i != 0) {
            struct poll_list *pp;
            pp = kmalloc(sizeof(struct poll_list) +
                         sizeof(struct pollfd) *
                         (i > POLLFD_PER_PAGE ? POLLFD_PER_PAGE : i),
                         GFP_KERNEL);
            if (pp == NULL)
                goto out_fds;
            pp->next = NULL;
            pp->len = (i > POLLFD_PER_PAGE ? POLLFD_PER_PAGE : i);
            if (head == NULL)
                head = pp;
            else
                walk->next = pp;

            walk = pp;
            if (copy_from_user(pp->entries, ufds + nfds-i,
                               sizeof(struct pollfd)*pp->len)) {
                err = -EFAULT;
                goto out_fds;
            }
            i -= pp->len;
        }
        fdcount = do_poll(nfds, head, &table, timeout);

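This long stretch of code builds a linked list. Each node is sized to fit within one page (usually 4 KB) and is reached through a pointer to struct poll_list; the struct pollfd entries themselves are accessed through the entries member of struct poll_list. The loop copies the user-space struct pollfd array into these entries. A typical program polls only a handful of fds, so the list usually needs a single node, that is, one page. When the user passes many fds, however, every poll call has to copy all of the struct pollfd entries into the kernel again, so argument copying and page allocation become a performance bottleneck of the poll system call. The last statement calls do_poll; let us follow it: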

[fs/select.c --> sys_poll() --> do_poll()]

    static void do_pollfd(unsigned int num, struct pollfd *fdpage,
                          poll_table **pwait, int *count)
    {
        int i;

        for (i = 0; i < num; i++) {
            int fd;
            unsigned int mask;
            struct pollfd *fdp;

            mask = 0;
            fdp = fdpage+i;
            fd = fdp->fd;
            if (fd >= 0) {
                struct file *file = fget(fd);
                mask = POLLNVAL;
                if (file != NULL) {
                    mask = DEFAULT_POLLMASK;
                    if (file->f_op && file->f_op->poll)
                        mask = file->f_op->poll(file, *pwait);
                    mask &= fdp->events | POLLERR | POLLHUP;
                    fput(file);
                }
                if (mask) {
                    *pwait = NULL;
                    (*count)++;
                }
            }
            fdp->revents = mask;
        }
    }

    static int do_poll(unsigned int nfds, struct poll_list *list,
                       struct poll_wqueues *wait, long timeout)
    {
        int count = 0;
        poll_table *pt = &wait->pt;

        if (!timeout)
            pt = NULL;

        for (;;) {
            struct poll_list *walk;
            set_current_state(TASK_INTERRUPTIBLE);
            walk = list;
            while (walk != NULL) {
                do_pollfd(walk->len, walk->entries, &pt, &count);
                walk = walk->next;
            }
            pt = NULL;
            if (count || !timeout || signal_pending(current))
                break;
            count = wait->error;
            if (count)
                break;
            /* Suspend current so that other processes can run; current
               resumes when the timeout expires (or it is woken earlier). */
            timeout = schedule_timeout(timeout);
        }
        __set_current_state(TASK_RUNNING);
        return count;
    }

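Note set_current_state and signal_pending: together they ensure that when a program is sleeping inside poll, a signal makes it leave the poll call promptly, whereas a task sleeping in an uninterruptible state would not be woken by a signal. Looking at do_poll as a whole, it mainly waits in a loop and leaves it once count is greater than 0 (or the timeout expires, or a signal is pending); count is updated mainly by do_pollfd. Note this piece of code: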

    while (walk != NULL) {
        do_pollfd(walk->len, walk->entries, &pt, &count);
        walk = walk->next;
    }

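When the user passes many fds (say 1000), this loop has to visit every one of them on each pass, which is the second reason poll becomes a bottleneck. For each fd passed in, do_pollfd calls that fd's own poll function. Simplified, the call looks like this: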

    struct file *file = fget(fd);
    file->f_op->poll(file, &(table->pt));

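If the fd refers to a socket, do_pollfd calls the poll implemented by the network device driver; if it refers to an open file on an ext3 file system, do_pollfd calls the poll implemented by the ext3 file system driver. In short, file->f_op->poll is implemented by the device driver. What does a driver's poll implementation usually look like? The standard implementation calls poll_wait, that is, it invokes the callback in struct poll_table with the device's own wait queue as an argument (devices normally have their own wait queue; a device that could not support asynchronous operation would be very frustrating to use). As a representative driver, let us look at the socket code for TCP: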

[net/ipv4/tcp.c --> tcp_poll]

    unsigned int tcp_poll(struct file *file, struct socket *sock, poll_table *wait)
    {
        unsigned int mask;
        struct sock *sk = sock->sk;
        struct tcp_opt *tp = tcp_sk(sk);

        poll_wait(file, sk->sk_sleep, wait);

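That is all the code we need. The rest merely checks state and returns status bits. The core of tcp_poll is poll_wait, and poll_wait calls the callback stored in struct poll_table. For the poll system call that callback is __pollwait, so tcp_poll can almost be read as the single statement: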

    __pollwait(file, sk->sk_sleep, wait);

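This also shows that every socket carries its own wait queue, sk_sleep, so the "device wait queue" mentioned above is really more than one queue. Now let us look at the implementation of __pollwait: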

[fs/select.c --> __pollwait()]

    void __pollwait(struct file *filp, wait_queue_head_t *wait_address, poll_table *_p)
    {
        struct poll_wqueues *p = container_of(_p, struct poll_wqueues, pt);
        struct poll_table_page *table = p->table;

        if (!table || POLL_TABLE_FULL(table)) {
            struct poll_table_page *new_table;

            new_table = (struct poll_table_page *) __get_free_page(GFP_KERNEL);
            if (!new_table) {
                p->error = -ENOMEM;
                __set_current_state(TASK_RUNNING);
                return;
            }
            new_table->entry = new_table->entries;
            new_table->next = table;
            p->table = new_table;
            table = new_table;
        }

        /* Add a new entry */
        {
            struct poll_table_entry *entry = table->entry;
            table->entry = entry+1;
            get_file(filp);
            entry->filp = filp;
            entry->wait_address = wait_address;
            init_waitqueue_entry(&entry->wait, current);
            add_wait_queue(wait_address, &entry->wait);
        }
    }

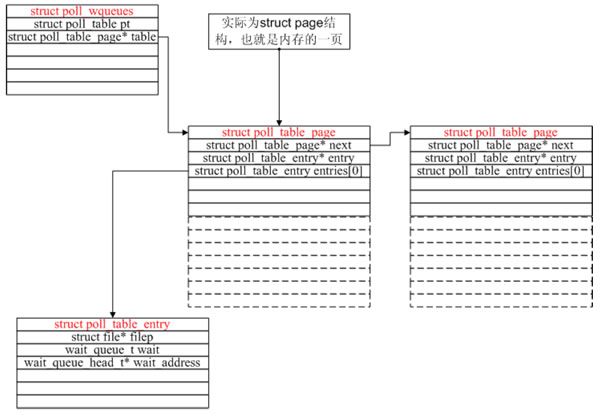

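__pollwait builds the data structure shown in the figure above (one call to __pollwait, i.e. one device poll call, creates exactly one poll_table_entry) and, through the wait member of struct poll_table_entry, hangs current on the device's wait queue. The wait queue here is wait_address, which corresponds to sk->sk_sleep in tcp_poll. We can now review how the poll system call works: register the callback __pollwait and initialize the variable table (of type struct poll_wqueues), copy in the struct pollfd array passed by the user (really, mainly the fds), then call the poll of every fd in turn, hanging current on the wait queue of each fd's device. When a device receives a message (network device) or finishes filling in file data (disk device), it wakes the processes on its wait queue, and current is woken up. What current does after waking in order to leave sys_poll is relatively simple and is not analyzed line by line here.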
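Seen from user space, the consequence of this design is that the complete fd array crosses into the kernel on every call. The helper below is a minimal sketch of that usage; count_readable and its fixed limit of 64 entries are assumptions made for illustration, while poll, struct pollfd and POLLIN are the standard interface.

```c
#include <poll.h>

/* Hypothetical helper: how many of the n fds in `fds` are readable right now? */
static int count_readable(const int *fds, int n, int timeout_ms)
{
    struct pollfd pfds[64];
    int i, ready, count = 0;

    if (n < 0 || n > 64)
        return -1;

    /* The whole array is rebuilt and handed to the kernel on every call. */
    for (i = 0; i < n; i++) {
        pfds[i].fd = fds[i];
        pfds[i].events = POLLIN;
        pfds[i].revents = 0;
    }

    ready = poll(pfds, (nfds_t)n, timeout_ms);
    if (ready <= 0)
        return ready;            /* 0 on timeout, -1 on error */

    /* The caller also has to scan every entry to find the ready ones. */
    for (i = 0; i < n; i++)
        if (pfds[i].revents & POLLIN)
            count++;
    return count;
}
```

With two pipes of which only one has data pending, count_readable(fds, 2, 0) returns 1, and it keeps returning 1 on repeated calls until the data is read.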

epoll

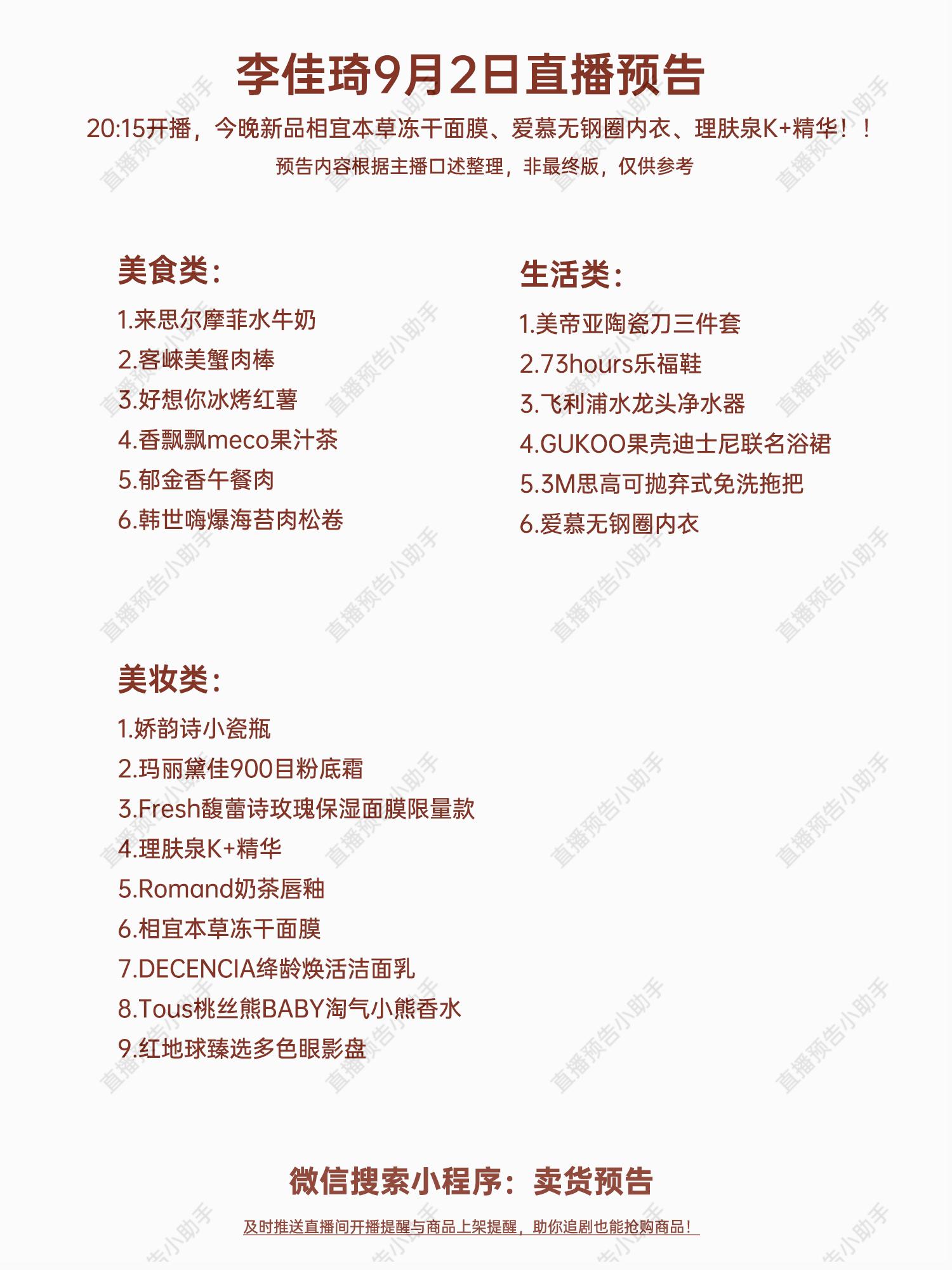

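The analysis above has identified the two bottlenecks of poll; the question now is how to remove them. First, copying 1000 fds into the kernel on every poll call is wasteful. Why does the kernel not keep the fds it has already copied? That is exactly what epoll does, and its API already says so: fds are not passed in at epoll_wait time; they are handed to the kernel beforehand through epoll_ctl and then waited on together, which eliminates the repeated copying. Second, epoll_wait does not add current to the wait queue of each fd's device in turn. Instead, when a device wait queue is woken, a callback is invoked (this requires a "wake-up callback" mechanism) that puts the fd with the event onto a list, and epoll_wait returns the fds on that list.

Anatomy of epoll

epoll is a module, so we start with the module's entry point, eventpoll_init: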

[fs/eventpoll.c --> eventpoll_init()]

    static int __init eventpoll_init(void)
    {
        int error;

        init_MUTEX(&epsem);

        /* Initialize the structure used to perform safe poll wait head wake ups */
        ep_poll_safewake_init(&psw);

        /* Allocates slab cache used to allocate struct epitem items */
        epi_cache = kmem_cache_create("eventpoll_epi", sizeof(struct epitem),
                                      0, SLAB_HWCACHE_ALIGN|EPI_SLAB_DEBUG|SLAB_PANIC,
                                      NULL, NULL);

        /* Allocates slab cache used to allocate struct eppoll_entry */
        pwq_cache = kmem_cache_create("eventpoll_pwq",
                                      sizeof(struct eppoll_entry), 0,
                                      EPI_SLAB_DEBUG|SLAB_PANIC, NULL, NULL);

        /*
         * Register the virtual file system that will be the source of inodes
         * for the eventpoll files
         */
        error = register_filesystem(&eventpoll_fs_type);
        if (error)
            goto epanic;

        /* Mount the above commented virtual file system */
        eventpoll_mnt = kern_mount(&eventpoll_fs_type);
        error = PTR_ERR(eventpoll_mnt);
        if (IS_ERR(eventpoll_mnt))
            goto epanic;

        DNPRINTK(3, (KERN_INFO "[%p] eventpoll: successfully initialized.\n",
                     current));
        return 0;

    epanic:
        panic("eventpoll_init() failed\n");
    }

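Interestingly, during initialization the module registers a new file system named eventpollfs (described by the eventpoll_fs_type structure) and then mounts it. It also creates two kernel caches (in kernel programming, if small blocks of memory are allocated frequently, a kmem_cache should be created to serve as a memory pool), used to hold struct epitem and struct eppoll_entry respectively. This code is a useful reference if you ever need to develop a new file system. Now consider why epoll_create returns a new fd: because it creates a new file in this eventpollfs file system! As follows: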

[fs/eventpoll.c --> sys_epoll_create()]

    asmlinkage long sys_epoll_create(int size)
    {
        int error, fd;
        struct inode *inode;
        struct file *file;

        DNPRINTK(3, (KERN_INFO "[%p] eventpoll: sys_epoll_create(%d)\n",
                     current, size));

        /* Sanity check on the size parameter */
        error = -EINVAL;
        if (size <= 0)
            goto eexit_1;

        /*
         * Creates all the items needed to setup an eventpoll file. That is,
         * a file structure, and inode and a free file descriptor.
         */
        error = ep_getfd(&fd, &inode, &file);
        if (error)
            goto eexit_1;

        /* Setup the file internal data structure ( struct eventpoll ) */
        error = ep_file_init(file);
        if (error)
            goto eexit_2;

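The function is simple. ep_getfd looks like a "get", but on the first call to epoll_create it actually creates a new inode, a new file and a new fd. ep_file_init creates a struct eventpoll and stores it in file->private_data; remember private_data, because it is used again later. At this point one might ask why the epoll developers did not keep the epoll handles in one huge in-kernel map and return a pointer from epoll_create. That may seem straightforward, but look closely: how many Linux system calls return a pointer? Almost none. (To be clear, malloc is not a system call; the brk that malloc calls is.) As the most successful heir of Unix, Linux follows one of Unix's great strengths: everything is a file. Input and output are files, and sockets are files too. This means programs on such a system can be very simple, because everything is just a file operation (Unix itself does not achieve this completely; Plan 9 does). Using a file system has a further benefit: epoll_create returns an fd rather than a pointer. A bad pointer is almost impossible to detect, whereas an fd can be validated by looking it up in current->files->fd_array[]. With epoll_create done, epoll_ctl is next; we omit the validation code: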

[fs/eventpoll.c --> sys_epoll_ctl()]

    asmlinkage long
    sys_epoll_ctl(int epfd, int op, int fd, struct epoll_event __user *event)
    {
        int error;
        struct file *file, *tfile;
        struct eventpoll *ep;
        struct epitem *epi;
        struct epoll_event epds;
        ....
        epi = ep_find(ep, tfile, fd);

        error = -EINVAL;
        switch (op) {
        case EPOLL_CTL_ADD:
            if (!epi) {
                epds.events |= POLLERR | POLLHUP;
                error = ep_insert(ep, &epds, tfile, fd);
            } else
                error = -EEXIST;
            break;
        case EPOLL_CTL_DEL:
            if (epi)
                error = ep_remove(ep, epi);
            else
                error = -ENOENT;
            break;
        case EPOLL_CTL_MOD:
            if (epi) {
                epds.events |= POLLERR | POLLHUP;
                error = ep_modify(ep, epi, &epds);
            } else
                error = -ENOENT;
            break;
        }

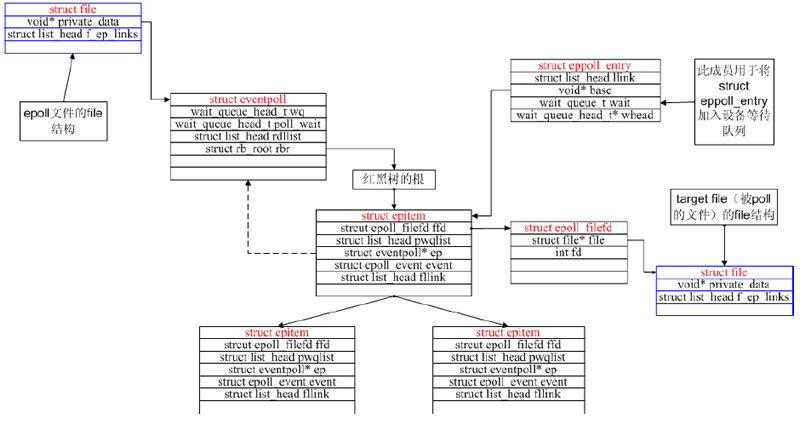

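So it first calls ep_find on some large structure (never mind for now what it is). If a struct epitem is found and the operation is ADD, it returns -EEXIST; if the operation is DEL, it calls ep_remove. If no struct epitem is found and the operation is ADD, ep_insert creates and inserts one. Straightforward. What is this "large structure"? From the way ep_find is called, the ep argument must point to it, and from ep = file->private_data we see that it is the struct eventpoll created in epoll_create. The implementation of ep_find shows that it searches the rbr member of struct eventpoll (a struct rb_root), which is the root of a red-black tree whose nodes are all struct epitem. So now it is clear: a newly created epoll file carries a struct eventpoll, that structure holds a red-black tree, and the tree is where each fd is stored on epoll_ctl. With the data structures understood, let us look at the core: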

[fs/eventpoll.c --> sys_epoll_wait()]

    asmlinkage long sys_epoll_wait(int epfd, struct epoll_event __user *events,
                                   int maxevents, int timeout)
    {
        int error;
        struct file *file;
        struct eventpoll *ep;

        DNPRINTK(3, (KERN_INFO "[%p] eventpoll: sys_epoll_wait(%d, %p, %d, %d)\n",
                     current, epfd, events, maxevents, timeout));

        /* The maximum number of event must be greater than zero */
        if (maxevents <= 0)
            return -EINVAL;

        /* Verify that the area passed by the user is writeable */
        if ((error = verify_area(VERIFY_WRITE, events,
                                 maxevents * sizeof(struct epoll_event))))
            goto eexit_1;

        /* Get the struct file * for the eventpoll file */
        error = -EBADF;
        file = fget(epfd);
        if (!file)
            goto eexit_1;

        /*
         * We have to check that the file structure underneath the fd
         * the user passed to us _is_ an eventpoll file.
         */
        error = -EINVAL;
        if (!IS_FILE_EPOLL(file))
            goto eexit_2;

        /*
         * At this point it is safe to assume that the private_data contains
         * our own data structure.
         */
        ep = file->private_data;

        /* Time to fish for events ... */
        error = ep_poll(ep, events, maxevents, timeout);

    eexit_2:
        fput(file);
    eexit_1:
        DNPRINTK(3, (KERN_INFO "[%p] eventpoll: sys_epoll_wait(%d, %p, %d, %d) = %d\n",
                     current, epfd, events, maxevents, timeout, error));

        return error;
    }

The same trick again: fetch struct eventpoll from file->private_data, then call ep_poll:

[fs/eventpoll.c --> sys_epoll_wait() --> ep_poll()]

    static int ep_poll(struct eventpoll *ep, struct epoll_event __user *events,
                       int maxevents, long timeout)
    {
        int res, eavail;
        unsigned long flags;
        long jtimeout;
        wait_queue_t wait;

        /*
         * Calculate the timeout by checking for the "infinite" value ( -1 )
         * and the overflow condition. The passed timeout is in milliseconds,
         * that why (t * HZ) / 1000.
         */
        jtimeout = timeout == -1 || timeout > (MAX_SCHEDULE_TIMEOUT - 1000) / HZ ?
            MAX_SCHEDULE_TIMEOUT : (timeout * HZ + 999) / 1000;

    retry:
        write_lock_irqsave(&ep->lock, flags);

        res = 0;
        if (list_empty(&ep->rdllist)) {
            /*
             * We don't have any available event to return to the caller.
             * We need to sleep here, and we will be wake up by
             * ep_poll_callback() when events will become available.
             */
            init_waitqueue_entry(&wait, current);
            add_wait_queue(&ep->wq, &wait);

            for (;;) {
                /*
                 * We don't want to sleep if the ep_poll_callback() sends us
                 * a wakeup in between. That's why we set the task state
                 * to TASK_INTERRUPTIBLE before doing the checks.
                 */
                set_current_state(TASK_INTERRUPTIBLE);
                if (!list_empty(&ep->rdllist) || !jtimeout)
                    break;
                if (signal_pending(current)) {
                    res = -EINTR;
                    break;
                }

                write_unlock_irqrestore(&ep->lock, flags);
                jtimeout = schedule_timeout(jtimeout);
                write_lock_irqsave(&ep->lock, flags);
            }
            remove_wait_queue(&ep->wq, &wait);

            set_current_state(TASK_RUNNING);
        }

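Another big loop, but a better one than poll's: on closer inspection, apart from sleeping and checking whether ep->rdllist is empty, it does nothing at all. Doing nothing is of course efficient, but who makes ep->rdllist non-empty? The answer is the callback installed during ep_insert: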

[fs/eventpoll.c --> sys_epoll_ctl() --> ep_insert()]

    static int ep_insert(struct eventpoll *ep, struct epoll_event *event,
                         struct file *tfile, int fd)
    {
        int error, revents, pwake = 0;
        unsigned long flags;
        struct epitem *epi;
        struct ep_pqueue epq;

        error = -ENOMEM;
        if (!(epi = EPI_MEM_ALLOC()))
            goto eexit_1;

        /* Item initialization follow here ... */
        EP_RB_INITNODE(&epi->rbn);
        INIT_LIST_HEAD(&epi->rdllink);
        INIT_LIST_HEAD(&epi->fllink);
        INIT_LIST_HEAD(&epi->txlink);
        INIT_LIST_HEAD(&epi->pwqlist);
        epi->ep = ep;
        EP_SET_FFD(&epi->ffd, tfile, fd);
        epi->event = *event;
        atomic_set(&epi->usecnt, 1);
        epi->nwait = 0;

        /* Initialize the poll table using the queue callback */
        epq.epi = epi;
        init_poll_funcptr(&epq.pt, ep_ptable_queue_proc);

        /*
         * Attach the item to the poll hooks and get current event bits.
         * We can safely use the file* here because its usage count has
         * been increased by the caller of this function.
         */
        revents = tfile->f_op->poll(tfile, &epq.pt);

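Note the line init_poll_funcptr(&epq.pt, ep_ptable_queue_proc); it amounts to epq.pt.qproc = ep_ptable_queue_proc;. The following tfile->f_op->poll(tfile, &epq.pt) calls the poll method of the monitored file (epoll calls it the "target file"), and that poll in turn calls poll_wait (remember poll_wait? Every device driver that supports poll calls it), which finally calls ep_ptable_queue_proc. This call chain is hard to follow because it is not a direct call at the language level. ep_insert also links the struct epitem into the f_ep_links list in struct file to make it easy to find; the fllink member of struct epitem serves that purpose.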

[fs/eventpoll.c --> ep_ptable_queue_proc()]

    static void ep_ptable_queue_proc(struct file *file, wait_queue_head_t *whead,
                                     poll_table *pt)
    {
        struct epitem *epi = EP_ITEM_FROM_EPQUEUE(pt);
        struct eppoll_entry *pwq;

        if (epi->nwait >= 0 && (pwq = PWQ_MEM_ALLOC())) {
            init_waitqueue_func_entry(&pwq->wait, ep_poll_callback);
            pwq->whead = whead;
            pwq->base = epi;
            add_wait_queue(whead, &pwq->wait);
            list_add_tail(&pwq->llink, &epi->pwqlist);
            epi->nwait++;
        } else {
            /* We have to signal that an error occurred */
            epi->nwait = -1;
        }
    }

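The code above is the most important thing ep_insert does: it creates a struct eppoll_entry, sets its wake-up callback to ep_poll_callback, and adds it to the device wait queue (whead here is the per-device wait queue described in the poll section). Only then will ep_poll_callback be called when the device becomes ready and wakes the waiters on its queue. On every poll system call, the kernel has to hang current (the current process) on the wait queues of all the devices behind the fds; with thousands of fds this is clearly expensive. epoll_wait avoids that: epoll hooks onto each wait queue only once, in epoll_ctl (this first time is unavoidable), and tells each fd "call the callback when you are ready". When a device has an event, the callback puts the fd on rdllist, and each epoll_wait only has to collect the fds on rdllist. By using callbacks this way, epoll implements a more efficient event-driven model. We can now guess what ep_poll_callback does: it must take the epitem (one per fd) on the red-black tree that received the event and insert it into ep->rdllist, so that when epoll_wait returns, rdllist holds exactly the ready fds.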
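To put rough numbers on this contrast, the sketch below counts wait-queue registrations and bytes of fd descriptions copied in. It is a hypothetical worst-case model, not a measurement: it assumes nothing is ready when each poll call starts and that the timeout is non-zero, so poll hooks every queue on every call. The names wait_cost, poll_cost and epoll_cost are invented for this illustration.

```c
#include <poll.h>
#include <sys/epoll.h>

/* Hypothetical worst-case cost model, not a measurement. */
struct wait_cost {
    long registrations;    /* wait-queue entries added over the whole run */
    long bytes_copied_in;  /* fd descriptions copied in from user space */
};

/* poll: every call copies the whole array and may hook every queue again. */
static struct wait_cost poll_cost(long nfds, long calls)
{
    struct wait_cost c;
    c.registrations = nfds * calls;
    c.bytes_copied_in = nfds * calls * (long)sizeof(struct pollfd);
    return c;
}

/* epoll: each fd is described and hooked once, at epoll_ctl(EPOLL_CTL_ADD). */
static struct wait_cost epoll_cost(long nfds, long calls)
{
    struct wait_cost c;
    (void)calls;           /* epoll_wait adds no per-fd registration */
    c.registrations = nfds;
    c.bytes_copied_in = nfds * (long)sizeof(struct epoll_event);
    return c;
}
```

Under these assumptions, 1000 fds waited on 100 times cost 100000 registrations with poll and 1000 with epoll.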

[fs/eventpoll.c --> ep_poll_callback()]

    static int ep_poll_callback(wait_queue_t *wait, unsigned mode, int sync, void *key)
    {
        int pwake = 0;
        unsigned long flags;
        struct epitem *epi = EP_ITEM_FROM_WAIT(wait);
        struct eventpoll *ep = epi->ep;

        DNPRINTK(3, (KERN_INFO "[%p] eventpoll: poll_callback(%p) epi=%p ep=%p\n",
                     current, epi->file, epi, ep));

        write_lock_irqsave(&ep->lock, flags);

        /*
         * If the event mask does not contain any poll(2) event, we consider the
         * descriptor to be disabled. This condition is likely the effect of the
         * EPOLLONESHOT bit that disables the descriptor when an event is received,
         * until the next EPOLL_CTL_MOD will be issued.
         */
        if (!(epi->event.events & ~EP_PRIVATE_BITS))
            goto is_disabled;

        /* If this file is already in the ready list we exit soon */
        if (EP_IS_LINKED(&epi->rdllink))
            goto is_linked;

        list_add_tail(&epi->rdllink, &ep->rdllist);

    is_linked:
        /*
         * Wake up ( if active ) both the eventpoll wait list and the ->poll()
         * wait list.
         */
        if (waitqueue_active(&ep->wq))
            wake_up(&ep->wq);
        if (waitqueue_active(&ep->poll_wait))
            pwake++;

    is_disabled:
        write_unlock_irqrestore(&ep->lock, flags);

        /* We have to call this outside the lock */
        if (pwake)
            ep_poll_safewake(&psw, &ep->poll_wait);

        return 1;
    }

The only line that really matters is list_add_tail(&epi->rdllink, &ep->rdllist);, which puts the struct epitem onto the rdllist of struct eventpoll. We can now draw a diagram of epoll's core data structures.
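The interplay between the wake-up callback and the ready list can be modelled in a few lines of user-space C. This is only a sketch under simplifying assumptions: a fixed-size array replaces the kernel's linked list, there is no locking or sleeping, and item, ep_model, model_poll_callback and model_collect are hypothetical names that merely echo the roles of epitem, eventpoll, ep_poll_callback and the collection step.

```c
#define MAX_ITEMS 16

/* Hypothetical user-space model; it echoes the kernel's roles, not its layout. */
struct item {                        /* plays the role of struct epitem */
    int fd;
    int on_ready_list;               /* like EP_IS_LINKED(&epi->rdllink) */
};

struct ep_model {                    /* plays the role of struct eventpoll */
    struct item *rdllist[MAX_ITEMS]; /* ready list, oldest first */
    int nready;
};

/* What a device wake-up triggers for one monitored item. */
static void model_poll_callback(struct ep_model *ep, struct item *it)
{
    if (it->on_ready_list || ep->nready == MAX_ITEMS)
        return;                      /* already queued (or model is full) */
    ep->rdllist[ep->nready++] = it;
    it->on_ready_list = 1;
}

/* Wait side: drain up to maxevents ready fds; no scan over all monitored fds. */
static int model_collect(struct ep_model *ep, int *out, int maxevents)
{
    int n = 0, i, left;

    while (n < ep->nready && n < maxevents) {
        out[n] = ep->rdllist[n]->fd;
        ep->rdllist[n]->on_ready_list = 0;
        n++;
    }
    /* Shift whatever did not fit to the front for the next call. */
    left = ep->nready - n;
    for (i = 0; i < left; i++)
        ep->rdllist[i] = ep->rdllist[n + i];
    ep->nready = left;
    return n;
}
```

The cost of model_collect depends only on how many items are ready, not on how many are monitored, which is the property the article attributes to epoll_wait.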

EPOLLET, unique to epoll

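EPOLLET is a flag unique to the epoll system calls; ET stands for Edge Triggered. Its exact meaning and uses are easy to look up. With EPOLLET, repeated events no longer keep interrupting the program's logic, so it is used frequently. How does EPOLLET work? epoll attaches a callback to every fd; when the device behind an fd has a message, the fd is put on the rdllist list, so epoll_wait only has to check rdllist to know which fds have events. Let us look at the last few lines of ep_poll: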

[fs/eventpoll.c --> ep_poll()]

        /*
         * Try to transfer events to user space. In case we get 0 events and
         * there's still timeout left over, we go trying again in search of
         * more luck.
         */
        if (!res && eavail &&
            !(res = ep_events_transfer(ep, events, maxevents)) && jtimeout)
            goto retry;

        return res;
    }

Copying the fds in rdllist to user space is the job of ep_events_transfer:

[fs/eventpoll.c --> ep_events_transfer()]

    static int ep_events_transfer(struct eventpoll *ep,
                                  struct epoll_event __user *events, int maxevents)
    {
        int eventcnt = 0;
        struct list_head txlist;

        INIT_LIST_HEAD(&txlist);

        /*
         * We need to lock this because we could be hit by
         * eventpoll_release_file() and epoll_ctl(EPOLL_CTL_DEL).
         */
        down_read(&ep->sem);

        /* Collect/extract ready items */
        if (ep_collect_ready_items(ep, &txlist, maxevents) > 0) {
            /* Build result set in userspace */
            eventcnt = ep_send_events(ep, &txlist, events);

            /* Reinject ready items into the ready list */
            ep_reinject_items(ep, &txlist);
        }

        up_read(&ep->sem);

        return eventcnt;
    }

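There is not much code. ep_collect_ready_items moves the fds from rdllist to txlist (leaving rdllist empty), ep_send_events copies the fds in txlist to user space, and ep_reinject_items then "returns" some of the fds from txlist to rdllist so that they are found on rdllist again next time. Here is the implementation of ep_send_events: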

[fs/eventpoll.c --> ep_send_events()]

    static int ep_send_events(struct eventpoll *ep, struct list_head *txlist,
                              struct epoll_event __user *events)
    {
        int eventcnt = 0;
        unsigned int revents;
        struct list_head *lnk;
        struct epitem *epi;

        /*
         * We can loop without lock because this is a task private list.
         * The test done during the collection loop will guarantee us that
         * another task will not try to collect this file. Also, items
         * cannot vanish during the loop because we are holding "sem".
         */
        list_for_each(lnk, txlist) {
            epi = list_entry(lnk, struct epitem, txlink);

            /*
             * Get the ready file event set. We can safely use the file
             * because we are holding the "sem" in read and this will
             * guarantee that both the file and the item will not vanish.
             */
            revents = epi->ffd.file->f_op->poll(epi->ffd.file, NULL);

            /*
             * Set the return event set for the current file descriptor.
             * Note that only the task task was successfully able to link
             * the item to its "txlist" will write this field.
             */
            epi->revents = revents & epi->event.events;

            if (epi->revents) {
                if (__put_user(epi->revents,
                               &events[eventcnt].events) ||
                    __put_user(epi->event.data,
                               &events[eventcnt].data))
                    return -EFAULT;
                if (epi->event.events & EPOLLONESHOT)
                    epi->event.events &= EP_PRIVATE_BITS;
                eventcnt++;
            }
        }
        return eventcnt;
    }

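The copying itself is unremarkable, but note the line revents = epi->ffd.file->f_op->poll(epi->ffd.file, NULL);. This poll call is sly: it passes NULL as the second argument. First, let us see how a device driver usually implements poll: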

    static unsigned int scull_p_poll(struct file *filp, poll_table *wait)
    {
        struct scull_pipe *dev = filp->private_data;
        unsigned int mask = 0;

        /*
         * The buffer is circular; it is considered full
         * if "wp" is right behind "rp" and empty if the
         * two are equal.
         */
        down(&dev->sem);
        poll_wait(filp, &dev->inq, wait);
        poll_wait(filp, &dev->outq, wait);
        if (dev->rp != dev->wp)
            mask |= POLLIN | POLLRDNORM;    /* readable */
        if (spacefree(dev))
            mask |= POLLOUT | POLLWRNORM;   /* writable */
        up(&dev->sem);
        return mask;
    }

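This code is taken from Linux Device Drivers, 3rd Edition, and is a classic. The device first hangs current (the current process) on the two queues inq and outq (the "hanging" is done by the callback pointer inside wait) and then waits to be woken by the device; after the wake-up, the event mask is obtained through mask (note the mask variable: it is what carries the event mask back to the caller). So what does poll_wait do if wait is NULL?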

[include/linux/poll.h --> poll_wait]

    static inline void poll_wait(struct file *filp, wait_queue_head_t *wait_address,
                                 poll_table *p)
    {
        if (p && wait_address)
            p->qproc(filp, wait_address, p);
    }

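If poll_table is NULL, it does nothing. Back in ep_send_events, the poll call with NULL as its second argument effectively means "I do not want to sleep, I only want the event mask". The mask obtained is then copied to user space. Once ep_send_events finishes, it is the turn of ep_reinject_items: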

[fs/eventpoll.c --> ep_reinject_items]

    static void ep_reinject_items(struct eventpoll *ep, struct list_head *txlist)
    {
        int ricnt = 0, pwake = 0;
        unsigned long flags;
        struct epitem *epi;

        write_lock_irqsave(&ep->lock, flags);

        while (!list_empty(txlist)) {
            epi = list_entry(txlist->next, struct epitem, txlink);

            /* Unlink the current item from the transfer list */
            EP_LIST_DEL(&epi->txlink);

            /*
             * If the item is no more linked to the interest set, we don't
             * have to push it inside the ready list because the following
             * ep_release_epitem() is going to drop it. Also, if the current
             * item is set to have an Edge Triggered behaviour, we don't have
             * to push it back either.
             */
            if (EP_RB_LINKED(&epi->rbn) && !(epi->event.events & EPOLLET) &&
                (epi->revents & epi->event.events) && !EP_IS_LINKED(&epi->rdllink)) {
                list_add_tail(&epi->rdllink, &ep->rdllist);
                ricnt++;
            }
        }

        if (ricnt) {
            /*
             * Wake up ( if active ) both the eventpoll wait list and the ->poll()
             * wait list.
             */
            if (waitqueue_active(&ep->wq))
                wake_up(&ep->wq);
            if (waitqueue_active(&ep->poll_wait))
                pwake++;
        }
        write_unlock_irqrestore(&ep->lock, flags);

        /* We have to call this outside the lock */
        if (pwake)
            ep_poll_safewake(&psw, &ep->poll_wait);
    }

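ep_reinject_items puts some of the fds in txlist back on rdllist. Which ones? Look at the test above that begins if (EP_RB_LINKED(&epi->rbn) && !(epi->event.events & EPOLLET) &&: the fds put back on rdllist are those that are not marked EPOLLET (the !(epi->event.events & EPOLLET) term) and whose events are of interest (the (epi->revents & epi->event.events) term). The next epoll_wait will then naturally take the fds on rdllist and copy them to the user again. An example: take a socket that has only called connect and has not sent or received any data. Its poll event mask always contains POLLOUT (see the driver example above), so every epoll_wait returns a POLLOUT event (rather annoying), because its fd is always put back on rdllist. If someone then writes a large amount of data to this socket so that it blocks (is no longer writable), the (epi->revents & epi->event.events) && !EP_IS_LINKED(&epi->rdllink) part of the test no longer holds (POLLOUT is gone), the fd is not put back on rdllist, and epoll_wait stops returning POLLOUT to the user. Now add EPOLLET to this socket, connect, and again send or receive nothing. This time the !(epi->event.events & EPOLLET) term fails, so epoll_wait returns the POLLOUT notification only once, because the fd is not put back on rdllist, and subsequent epoll_wait calls report no events until a new wake-up from the device puts it there again.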
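The difference is easy to observe from user space. The sketch below uses EPOLLIN on a pipe rather than the POLLOUT-on-a-socket scenario described above, purely because a pipe is simpler to set up; count_reports is a hypothetical helper, while epoll_create, epoll_ctl, epoll_wait and EPOLLET are the real interface. It writes one byte, never reads it, and counts how many consecutive epoll_wait calls report the fd.

```c
#include <sys/epoll.h>
#include <unistd.h>

/*
 * Hypothetical helper: register the read end of a fresh pipe with EPOLLIN plus
 * extra_flags (0 or EPOLLET), write one byte, then count how many of `rounds`
 * consecutive non-blocking epoll_wait calls report the fd. The byte is never read.
 */
static int count_reports(unsigned int extra_flags, int rounds)
{
    int p[2], epfd, i, hits = 0;
    struct epoll_event ev = { 0 }, out;

    if (pipe(p) != 0)
        return -1;
    epfd = epoll_create(1);          /* the size argument only has to be > 0 */
    if (epfd < 0) {
        close(p[0]);
        close(p[1]);
        return -1;
    }

    ev.events = EPOLLIN | extra_flags;
    ev.data.fd = p[0];
    if (epoll_ctl(epfd, EPOLL_CTL_ADD, p[0], &ev) != 0 ||
        write(p[1], "x", 1) != 1) {
        hits = -1;
    } else {
        for (i = 0; i < rounds; i++)
            if (epoll_wait(epfd, &out, 1, 0) == 1)
                hits++;
    }

    close(epfd);
    close(p[0]);
    close(p[1]);
    return hits;
}
```

Without EPOLLET the fd is reported on every round, because it keeps being put back on the ready list; with EPOLLET it is reported once and then stays silent until new data arrives.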


Article link: //www.cosmedna.com/article/573597486.html